Previously, I posed 10 questions to tax and tickle people’s scientific methodology instead of, sigh, whether they know the definition of “photosynthesis” (if we really wanted to tax science knowledge, surely you want to ask about the light-dependent and light-independent redox pathways… No? Okay.). This is not easy – many are classic counter-intuitive examples of reasoning biases – and it’s not taught at school properly.

Anyway, here are the answers. This is long, and each one could take up a post of its own, as they require a lot of deconstruction and explanation.

Short aside: Unlike most posts I write, I’m not linking out to definitions. The jargon is in bold for people to research at their leisure. I did ponder linking each to LMGTFY, but I’m just so damn lazy.

Question 1

Question: The chaya leaf is claimed as a cancer cure. What is the most important part of the article announcing this?

Answer: (c) The experiments they have done

This should have been easy. The only relevant thing is the experiments done to prove the claim. That is ultimately what science is about; demonstrating a hypothesis through correct methodology and experimental processes. You can have all the cool theories you want, but if it doesn’t turn out to be true, then it means nothing. None of the alternative answers mentioned “it makes sense” or “it was a sound theory”, however.

The other answers on offer presented numerous fallacies and things that are very common in the media – however, they are irrelevant. The expert’s qualifications are irrelevant because of the argument from authority fallacy. Ditto the institution. Although who they are and where they come from are a good proxy, and worth raising when people speak outside their field of expertise, they are not directly relevant and have no impact on the validity of experimental work. A nine-year-old girl working in a shed can produce science as valid as that of a professor tenured at Harvard – it depends on how their theories and experiments work (note that the opposite of this, where you assume the unqualified person is better, is known as the Galileo Gambit).

The exact same goes for the reporter’s opinion on the piece. Though if the reporter is a qualified scientist, the ‘expert’ in question isn’t, and the reporter is saying it’s all bunk, it’s probably a good indication, but it’s still irrelevant and has no effect on the actual experimental evidence for a claim. Similarly, whether friends have tried something or not is anecdotal evidence – anecdotes are not the same as data because they are prone to so many spectacular biases such as selection bias, selective reporting or cherry picking. Also, the plural of “anecdote” is not “data” (except when it is).

Question 2

Question: Autism correlates with organic food sales. What does this tell us?

Answer: (a) Nothing in particular

The autism and organic food correlation has been around for a while now and has slowly become a classic go-to example for this. The data is reasonably legit, but of course mere correlation is not causation; this rules out answers (b) and (c). From a correlation alone we cannot tell if one causes the other, or which way around it goes. For that, we need to do a controlled experiment, where one variable is altered and we watch the change in the other. The existence of a correlation alone can give us a nice hypothesis to test, but it needs to be tested in a controlled way first.

Answer (e) is obvious nonsense and constitutes both an ad hominem attack, where you dismiss data and evidence on irrelevant personal grounds, and batcrap crazy conspiracy theory thinking (and no, that isn’t an ad hominem attack, it’s just a statement of fact).

When it comes to answer (d), however, it’s complicated. One can assume a hidden third relationship is at work, something known as a confounding variable or a confounding factor, but that’s not always the case; after all, there’s a correlation between smoking and lung cancer but there is a direct cause that’s been demonstrated there through other studies and good theoretical links, rather than a third hidden variable at work. Alternatively, there may be no causal connection at all, even given very strong correlation. You need at least a decent theory for this – for example, shoe size and reading ability correlate, and the third hidden relationship is age. Therefore the answer is a) the graph doesn’t really tell us anything particularly interesting apart from the fact there is a correlation. How we proceed from there to say something more useful is a matter of doing further experiments and analysis.
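To see how a hidden third variable can manufacture a correlation, here is a minimal sketch in Python. Everything in it is made up for illustration: the `simulate_children` model, its coefficients and its noise levels are assumptions, not real data. Age drives both shoe size and reading score, and nothing else links the two.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate_children(n=5000, seed=1):
    """Hypothetical model: age is the only cause of both measurements."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        age = rng.randint(5, 12)
        shoe = 0.8 * age + rng.gauss(0, 1)      # depends on age only
        reading = 10 * age + rng.gauss(0, 10)   # depends on age only
        rows.append((age, shoe, reading))
    return rows

rows = simulate_children()
overall = pearson([r[1] for r in rows], [r[2] for r in rows])

# Hold the confounder fixed: look only at the eight-year-olds.
eights = [r for r in rows if r[0] == 8]
within = pearson([r[1] for r in eights], [r[2] for r in eights])

print(f"all ages: r = {overall:.2f}; age 8 only: r = {within:.2f}")
```

If the model behaves as intended, the correlation across all ages should come out strong, while the correlation among children of a single age should hover around zero, because holding the confounder fixed removes the only link between the two.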

Question 3

Question: A company is offering a get-rich-quick scheme. What further evidence do you need?

Answer: (d) Interviews and testimony from the failures

In the ideal world, you want statistics to answer this. You want data. But in this case what we’re looking for is silent evidence, which is a specific corollary to cherry picking and selective reporting. This is evidence where some kind of selection effect stops you seeing it – ergo, the answer is (d).

For instance, suppose everyone who survived a long sea voyage through stormy weather in the 17th century said they prayed on the journey. But what about those who didn’t survive to tell the tale; did they pray also, or didn’t they? This evidence is silenced. In this particular business example, you can sum it up with the phrase “there are no unsuccessful entrepreneurs”. When the failures self-select out of the pool of evidence, things look better than they really are. This is also common amongst psychics, where selective reporting and cherry picking means we only get to hear about the predictions that came true – the ones that didn’t are conveniently forgotten.

The other answers allude to confirmation bias. This is where your method of testing focuses on confirming your hypothesis. This sounds counter-intuitive – because, after all, you do want to confirm it, right? – but is actually the wrong way to go about things. Repeatedly looking at where your hypothesis works gets you nowhere. You need to find the places where it doesn’t work. Trotting out success stories does nothing, you need to look for the failures to get a better picture.

Question 4

Question: An alarm, which catches 95% of thieves, sounds. What are the odds that it has caught a thief?

Answer: (e) More data needed

This question is about test sensitivity. Simply put, the answer is (e). We need more data; specifically the data on how many false positives it generates (and, strictly, how rare thieves are to begin with). The false positive rate is tied to what is known as the specificity: it is 100% minus the specificity. If the test generated zero false positives, then the answer would be 100% – but in reality this is almost never the case and all tests generate some degree of false positives, usually as a side effect of having such a high sensitivity. For example, police sniffer dogs usually have a high sensitivity to drugs, but are easily led by the suspicions of their handlers or distracted by similar, but benign, substances. That produces a lot of false positives.

It is only the sensitivity that we’ve been given in the question, 95%. As an approximation we can say it’ll almost certainly be less than 95%, but without that false positive data we cannot be sure. This effect is alarmingly counter-intuitive. Question 8, below, goes into this effect in way more detail.
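For the curious, here is a rough sketch of the arithmetic using Bayes' theorem. Only the 95% sensitivity comes from the question; the false alarm rates and the 1-in-1,000 chance that any given person is a thief are numbers I have assumed purely for illustration, and `chance_alarm_is_real` is just a name made up for this sketch.

```python
def chance_alarm_is_real(sensitivity, false_alarm_rate, prior):
    """P(thief | alarm sounds), by Bayes' theorem."""
    true_alarms = sensitivity * prior
    false_alarms = false_alarm_rate * (1 - prior)
    return true_alarms / (true_alarms + false_alarms)

SENSITIVITY = 0.95   # from the question
PRIOR = 0.001        # assumed: 1 in 1,000 people passing the alarm is a thief

# Assumed false alarm rates, from a perfect alarm to a jumpy one.
for false_alarm_rate in (0.0, 0.001, 0.01, 0.05):
    p = chance_alarm_is_real(SENSITIVITY, false_alarm_rate, PRIOR)
    print(f"false alarm rate {false_alarm_rate:.1%}: "
          f"chance of a real thief = {p:.1%}")
```

With no false alarms at all the answer is 100%, and it falls away quickly as the false alarm rate creeps up, which is why the 95% figure on its own cannot answer the question.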

Question 5

Question: Some men are tall. True or false?

Answer: False

Here, we have a logical syllogism. A fun thing to do in logical arguments is to swap around the nouns used in the premises with something else, because this helps highlight a formal logical fallacy. A formal logical fallacy is a breakdown in the reasoning, so should be fallacious no matter what we put in there as variables. In this example we’re talking about three properties and how they are related; “men”, “tall” and “doctors”. We could simply make this generic and replace them with X, Y and Z.

- Premise 1) Some X are Y

- Premise 2) Some Y are Z

- Conclusion) Some X are Z

Now it becomes a little clearer that there’s a problem. The premises don’t formally assess the relationship between X and Z. We can highlight it further by inputting values where we know that the conclusion will be wrong:

- Premise 1) Some men are doctors

- Premise 2) Some doctors are women

- Conclusion) Some men are women

Quibbles about the gender binary aside (the real world is always slightly fuzzier than pure logic suggests) this is obviously incorrect. We only get tricked into thinking the logic relating “tall”, “men” and “doctors” is a valid piece of logic because the conclusion happens to be true outside the context of the argument. Separating the formal and informal aspects of a logical argument, therefore, is an essential skill in order to spot flawed reasoning.
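The counterexample can also be checked mechanically. Below is a minimal sketch that treats each category as a set of people and reads “some A are B” as “the two sets overlap”. The names are placeholders in a tiny made-up world, not data.

```python
def some(a, b):
    """'Some A are B' holds when the two sets share at least one member."""
    return len(a & b) > 0

# A tiny hypothetical world.
men = {"Alan", "Bob"}
women = {"Carol", "Dana"}
doctors = {"Bob", "Carol"}

premise_1 = some(men, doctors)     # Some men are doctors
premise_2 = some(doctors, women)   # Some doctors are women
conclusion = some(men, women)      # Some men are women

print(premise_1, premise_2, conclusion)  # True True False
```

Both premises hold and the conclusion fails, and a single case like that is enough to show that the form “some X are Y, some Y are Z, therefore some X are Z” is invalid, whatever we plug in.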

This context-dependent assessment of reason is common in all of us. In the classic example of the Wason Card Problem, the version using abstract letters and numbers, or colours and numbers, easily trips most people up; but a context-driven example involving ages and whether someone is legally drinking alcohol results in most people getting the answer right.

Question 6

Question: 15 people drop out of a drug trial. What is the effect?

Answer: (b) Weaken the study because we lose information from the drop-outs

This is basic Ben Goldacre style stuff. It’s known that trials that do not report their drop-out rates overestimate their success, and not reporting these losses is a way to cover up potentially disastrous results. This is vastly important because correct data from medical trials save lives. Knowing as much about a medical trial as possible, therefore, is essential to assessing its validity.

So consider the following: Why would people drop out? Because the drug affected them badly? Because it simply didn’t work and they had to be excluded? Because of some other ethical problems with the administration of the trial? Perhaps something else.

A large drop-out rate is one of the biggest alarm bells for a medical trial, and so the answer is (b). Hopefully I don’t need to state why (a) and (d) are incorrect here, but (c) is incorrect because the statistical significance of a study is proportional not just to the size of a group but to the size of the effect. If we’re looking for a small, subtle effect on the order of 1% we need a large number of people. If the effect is huge and dramatic, we need relatively fewer people to say it’s significant. Therefore dropping the number in a group from 40 to 25 would not necessarily alter the significance of the outcome – but it does suggest that if those 15 dropped out for a particular reason, the trial’s conclusions will be erroneous.
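To put some numbers on how much the drop-outs can hide, here is a small sketch. The 40 enrolled and 25 completing match the figures above; the 20 who improved is a number I have invented for illustration, and `success_rate_bounds` is a hypothetical helper, not a standard analysis.

```python
def success_rate_bounds(enrolled, completed, improved):
    """Success rate among completers, plus the worst and best cases
    once the unknown outcomes of the drop-outs are counted."""
    dropped = enrolled - completed
    reported = improved / completed            # what a careless report shows
    worst = improved / enrolled                # every drop-out was a failure
    best = (improved + dropped) / enrolled     # every drop-out was a success
    return reported, worst, best

# 40 enrolled, 25 completed, 20 of those improved (the 20 is made up).
reported, worst, best = success_rate_bounds(40, 25, 20)
print(f"reported: {reported:.0%}, worst case: {worst:.0%}, best case: {best:.0%}")
# prints "reported: 80%, worst case: 50%, best case: 88%"
```

An impressive-looking 80% could really be anything from 50% upwards, depending entirely on why those 15 people left, which is exactly the information we have lost.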

This is also related to an issue called publication bias. This is where studies that come up negative simply aren’t published or released to the world. It’s another form of selection bias that prevents us seeing a full picture of all the data we have available. If people drop out of a study because a drug didn’t work for them, the final report will over-estimate its effectiveness. If studies aren’t published because they show negative results, our meta studies (where we look at multiple published studies in unison with each other) will also over-estimate the effectiveness. Thankfully, there are ways to spot these biases and they are implemented in meta studies.

Question 7

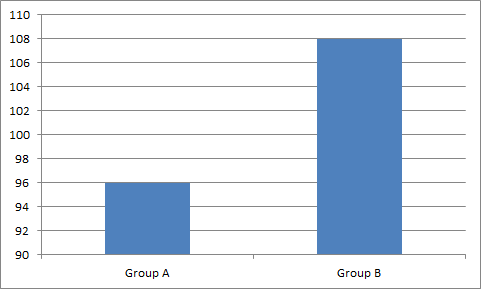

Question: Why is the graph misleading?

Answer: (c) Because it doesn’t start at zero

So, another graph question. First of all, I just made it up. It’s bollocks data, it’s just for illustration. Yet it illustrates things that have been seen on news reports quite frequently. The answer is c) the graph does not start from zero. Now, sometimes there are good reasons for this, but often it’s there deliberately to convey a political message. Namely, it emphasises differences where the differences may, in the grand scheme of things, be minuscule. That’s not to say they’re not significant, nor does it say they’re not real; it just robs them of a wider context. This kind of manipulation is all too common.
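A quick way to see how much a truncated axis exaggerates is to compare the ratio of the bars as drawn with the ratio of the values themselves. The values 52 and 55 and the axis starting at 50 are invented for this sketch; they are not read off the graph above.

```python
def drawn_ratio(a, b, axis_start=0.0):
    """Ratio of bar heights (b to a) as drawn when the axis starts at axis_start."""
    return (b - axis_start) / (a - axis_start)

a, b = 52.0, 55.0   # hypothetical values for bars "A" and "B"

honest = drawn_ratio(a, b)                    # axis starts at zero
truncated = drawn_ratio(a, b, axis_start=50)  # axis starts at 50

print(f"axis from 0:  B looks {honest:.2f}x the size of A")
print(f"axis from 50: B looks {truncated:.2f}x the size of A")
```

With these made-up numbers a difference of under 6% is drawn as a bar two and a half times as tall. The difference is still real; it has just lost its context.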

Obviously, (a) is incorrect because we can kinda assume it’s a percentage based on context (although meaningless graphs do crop up in pseudo-science). (b) is incorrect because it isn’t continuous data. (d) is completely irrelevant (it’s illustrative data, and we assume “A” and “B” are defined properly elsewhere) and (e) is just making stuff up – there is no such thing as some subliminal bias that you introduce just through colour. Colour perception doesn’t work like that (though, interestingly, different coloured pills do produce changes in the placebo effect).

Question 8

Question: Which test is better?

Answer: (e) It’s complicated

As mentioned above, this is related to question 4. We’re talking about test sensitivity again – except in this case the question is very explicit about the existence of false positives. Indeed, it gives you enough figures to work out the number Question 4 asked for: the odds of the result being a true positive if the test says “yes” (the positive predictive value, in the jargon; the false positives are what are known as Type I errors). If you work it out for both tests, the new and old, it comes up at roughly the same for both.

A short aside: In fact, the figures given in the question were rounded off from ones I generated after a bit of fudging with a spreadsheet to make this the case: a test with 63% sensitivity and a 1.1% false positive rate has about the same positive predictive value as a test with 99% sensitivity and a 1.8% false positive rate, and these figures just about render the difference between the two independent of any realistic background rate (or prior probability in Bayesian jargon). The actual chance of a true positive if the test says “yes” works out to about 36% in both of these cases. So, the question uses rounded off figures of 99% and 65%, and 2% and 1% respectively. The false positive rate here seems quite negligible, but you’re actually doubling it from one test to the other. Therefore the final positive predictive value is very susceptible to small changes in this value.

So, in principle, the answer is (c) if you do the arithmetic. In terms of how many of the “yes” results turn out to be Type I errors, the two tests come out roughly the same.

However, and this is a big HOWEVER, the real answer is (e). It really is much more complicated than that. The new test, with 99% sensitivity and a 2% false positive rate, is marginally worse because of its false positives – with the rounded figures, the chance that a “yes” is a true positive drops from 40% to 33%. The new test also produces a larger number of false positives in real terms – twice as many. This sort of thing leads to complications. It adds to psychological trauma as more people are hit with positive test results, and end up undergoing further tests that can often be very invasive (such as biopsies) and may transpire to be unnecessary. There are also further practical cost implications to go with further testing of more people – even though the proportion of positive results that are false remains roughly the same. But on the flip side, fewer people slip by the test through false negatives (only 1%, in fact). This means despite the cost and psychological implications of the extra false positives, the new test will likely save lives.
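Here is a rough sketch of that trade-off in numbers, using the rounded figures from the question (65% and 1% for the old test, 99% and 2% for the new one). The 1% background rate is my assumption, chosen because it reproduces the 40% and 33% quoted above, and the population of 100,000 and the `screen` helper are likewise made up for illustration.

```python
def screen(population, prior, sensitivity, false_positive_rate):
    """Expected outcomes of screening a population with one test."""
    sick = population * prior
    healthy = population - sick
    true_pos = sick * sensitivity
    false_neg = sick - true_pos
    false_pos = healthy * false_positive_rate
    return {
        "true_pos": true_pos,
        "false_neg": false_neg,
        "false_pos": false_pos,
        # chance that a "yes" from the test is a true positive
        "chance_yes_is_real": true_pos / (true_pos + false_pos),
    }

POPULATION = 100_000   # assumed
PRIOR = 0.01           # assumed background rate

old = screen(POPULATION, PRIOR, sensitivity=0.65, false_positive_rate=0.01)
new = screen(POPULATION, PRIOR, sensitivity=0.99, false_positive_rate=0.02)

for name, r in (("old", old), ("new", new)):
    print(f"{name}: {r['false_pos']:.0f} false positives, "
          f"{r['false_neg']:.0f} missed cases, "
          f"a 'yes' is real {r['chance_yes_is_real']:.0%} of the time")
```

Under these assumptions the new test sends roughly twice as many healthy people for follow-up, but misses about 10 cases where the old one misses about 350. Which of those matters more is not something the arithmetic can decide for you.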

How you balance between those is a difficult, and indeed very complicated, decision, and also requires looking into a lot of variables such as how you target and pre-screen your testing based on demographic data, and how you refer people for further testing. These are all very real considerations, however they’re rarely, if ever, raised in newspapers that discuss the effectiveness of medical treatments and interventions.

Question 9

Question: All toupees look fake. How do you evaluate this statement?

Answer: (b) Examine real looking hair

This is informally known as the toupee fallacy. No, really, look it up. It is another specific case of hidden and silent evidence, and a test of confirmation bias as alluded to in Question 3, above.

First of all, woe be unto anyone who chose (e). Semantic definition games are where we need to roll up a newspaper and bash you on the nose while saying “NO” in a firm tone of voice. Just no. You cannot simply “define” something as true, otherwise your statement is meaningless. A more long-winded justification of this can wait for another day, though.

Next (d) – no. This is wrong. You can evaluate the statement because you can evaluate any statement where the real-world results are defined correctly. This is where I differ greatly (read, “I’m right, they’re wrong”) with people who claim that prayer can’t be tested with science. No. If you say “something has effect [X]”, then we can go looking for effect [X]. If you want to claim that toupees look fake, all you need is a suitable definition of a toupee (easy) and a consistent definition of what you consider “fake” (slightly less easy, but still doable). It’s very possible.

So, (a) – examining “fake” looking hair. Well, if you keep examining fake hair, you will find bad haircuts and toupees. That’s confirmation bias at work. You have proven nothing since you haven’t looked at cases where your hypothesis may fall down. And technically this is also true of answer (c). You’re examining both, you’re wasting your time. What you need to do is just examine real looking hair – because you might find a toupee that doesn’t look fake. You can only disprove your hypothesis by looking for things that will break it, and only by attempting to break it can you be sure it holds true. You cannot disprove that toupees look fake by examining only fake hair, you must try to find a toupee amongst the real looking things.

Question 10

Question: Two people died after receiving a new vaccine. What evidence do you need to evaluate this?

Answer: (b) The rate of vaccine uptake

We end with typical and all-too-common newspaper scaremongering. Remember; anecdotes are not evidence. So you need data, not stories. That means if you’ve answered (e), you need to take a course in critical thinking. Just like with correlation, anecdotes can give us ideas and hypotheses to test, but they are not in themselves sufficient evidence. If we recall life-saving drugs on a whim because of anecdotal evidence that isn’t backed up by data, we can cause more harm precisely because those drugs are life-saving. This isn’t something we should do on a hunch to placate the public because it could cost lives.

So we need data, but we need to ask what data is relevant. The fact I’m saying this should immediately point out that the answer isn’t (d). We don’t need all of it. Indeed, the answers (a) and (c) aren’t relevant to the question – precisely because we’re not looking at flu mortality. People die in flu epidemics, that rate might say something about the efficacy of the medications, but it says nothing about the safety of the medications. We’re not looking at flu related deaths, we’re looking at vaccine related deaths. Flu mortality is not even a confounding variable, it’s an irrelevant variable. You only need b) the rate of vaccine uptake in that demographic. I threw in option (d) as an intentional red herring; this is not that complicated a question.

I’ve been cheeky here, though; you do need one more piece of data that isn’t listed and that’s the death rate of that demographic. You can look this up in an actuarial table so let’s assume it’s given knowledge. This death rate works out to about 1% for this demographic. If 1% of people over 60 are expected to die anyway, then whether 2 individual deaths is a significant event depends only on the rate of vaccine uptake. If only 2-3 people took it and 2 died, we might be onto something – the vaccine uptake rate is the key piece of evidence. However, this is an unlikely scenario. If 50% of the population took the medical intervention, that could be millions. And 1% of a million is 10,000. If tens of thousands of your demographic were going to die anyway, of any cause, then two cases is hardly a significant event. Of course, you need to re-work those figures from the per-year rate to a per-day rate to figure the odds of anyone dying on the same day or the day after they receive a vaccine, but tens of thousands divided by 365 (not taking into account seasonal variations) still isn’t going to make 2 reported deaths anything more than coincidental.

In fact, we’d be expecting potentially hundreds of people to die the day they were vaccinated anyway, so if only two died after taking the vaccine, it would be significant evidence that this magical elixir actually stops you dying. Now that is counter-intuitive.
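The back-of-envelope sum can be sketched in a few lines. The 1% annual death rate is the figure used above; the uptake numbers are assumptions for illustration, deaths are treated as spread evenly over the year (ignoring the seasonal variation already mentioned), and the Poisson model is my own simplification rather than anything taken from an actuarial table.

```python
import math

def expected_coincidental_deaths(vaccinated, annual_death_rate, window_days=1):
    """Expected deaths within window_days of vaccination from unrelated causes."""
    return vaccinated * annual_death_rate * window_days / 365

def poisson_at_most(k, mean):
    """P(X <= k) for a Poisson-distributed count with the given mean."""
    return sum(math.exp(-mean) * mean ** i / math.factorial(i)
               for i in range(k + 1))

ANNUAL_DEATH_RATE = 0.01   # from the text above

for vaccinated in (1_000_000, 10_000_000):   # assumed uptake figures
    mean = expected_coincidental_deaths(vaccinated, ANNUAL_DEATH_RATE)
    p = poisson_at_most(2, mean)
    print(f"{vaccinated:,} vaccinated: expect about {mean:.0f} deaths within "
          f"a day of the jab; chance of seeing 2 or fewer = {p:.1e}")
```

Even at the lower uptake figure, dozens of deaths within a day of vaccination are expected by coincidence alone, so two reported deaths tell us nothing about the vaccine unless we know how many people actually took it.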

Conclusions and Further Work

Well, if you got to the end of this, congratulations. Maybe it was enlightening, maybe it was revision. I’m grateful to a few people for discussions of topics, contexts and statistics. You know who you are.

Complaints to the usual place.

